3: Alice in Wonderland

Follow the white bunny

The biggest drop at CES this year came from newcomer rabbit.tech, which brought down the house with the meme-worthy, teenage engineering-designed R1: an AI companion, a new category of device with an action-capable AI assistant that can act as a master controller for other apps.

The ever-present undercurrent of Hackernewsy Luddism in the tech world required a number of artful backbenchers to ask:

Is it a phone? (Yes, it seems to be.)

Why is it not an app? (It breaks the siloed app security model by spoofing the human user.)

Why would anyone carry and recharge another device? (You carry and recharge your vape pen… so really the question you’re asking is: is it addictive enough to bother?)

Why would any sane person do hardware? (Multi-trillion-dollar market size if they get it right; plus, if you need to break the app security model, you have to get out from under the thumb of the enforcers.)

Founder Jesse Lyu is a two-time YC veteran (W15 and W18) who sold his first hardware startup to Baidu and sits on the board of teenage engineering.

Rabbit joins the list of AI hardware startups, which at this point includes Humane, Avi Schiffmann’s Tab, Dan Siroker’s Rewind Pendant, etc.

The key question everyone seeks to answer: can we actually build an AI companion that is helpful, harmless, easy to use, and not hackable at the drop of the proverbial rabbit into the hat?

Full CES Preso drop here

Augmenting the white coats

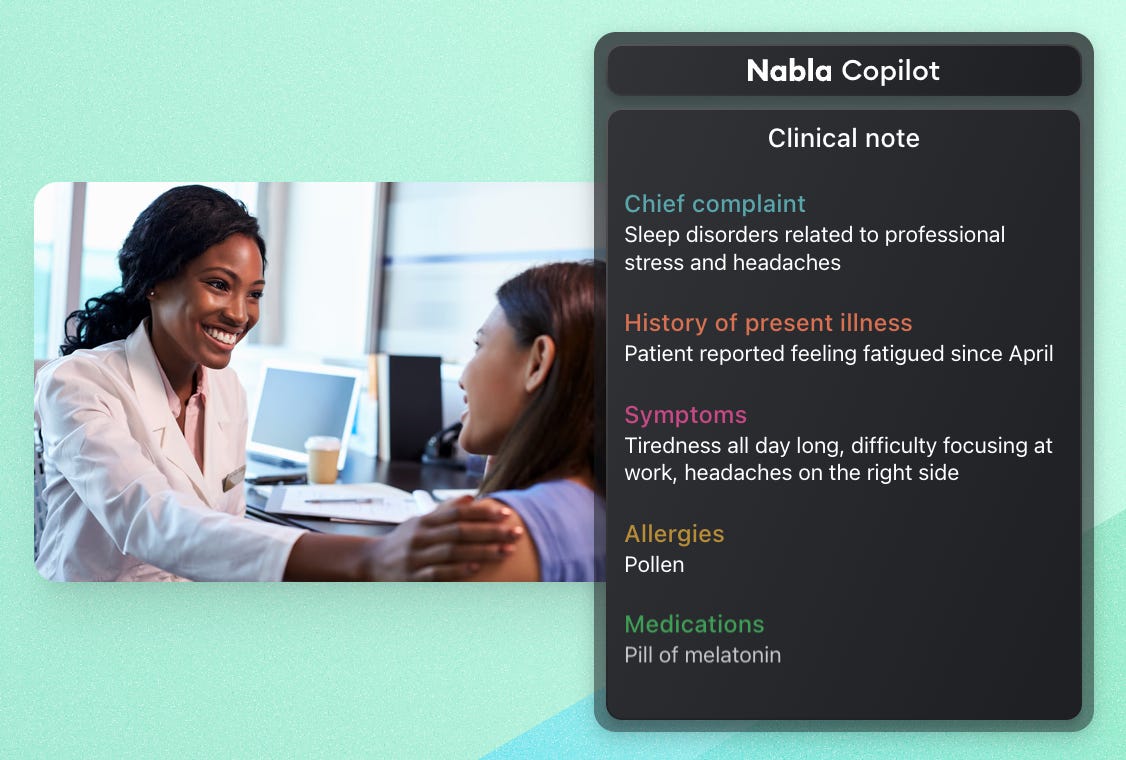

Intelligence augmentation proceeds apace, with an excellent entry from former Meta FAIR researcher Alexandre Lebrun: the Nabla Medical Copilot. Nabla is the holy grail: a medical transcription assistant that is completely passive during the initial physician consultation, requiring the doctor's attention only to approve the final record of the visit.

Given that the number one complaint of any attending today is having to do data entry into the corrupt, design-by-committee mess that is the Epic EHR, any improvement here would be a godsend for productivity in a sector that comprises 18% of the US economy.

Nabla uses:

Off-the-shelf MSFT Azure and fine-tuned OpenAI open-source Whisper speech-to-text models

GPT-3, GPT-4 and Llama 2

and no doubt a bunch of middleware to parse, categorize, and fill in the blanks on a typical Electronic Health Record

It is also notable that Alex’s career has been so focused on allowing humans to communicate with machines: from a stint at Nuance, to founding Wit.ai (which let developers code simple chatbots and was acquired by Facebook), and now finally Nabla.

Or Replacing Them

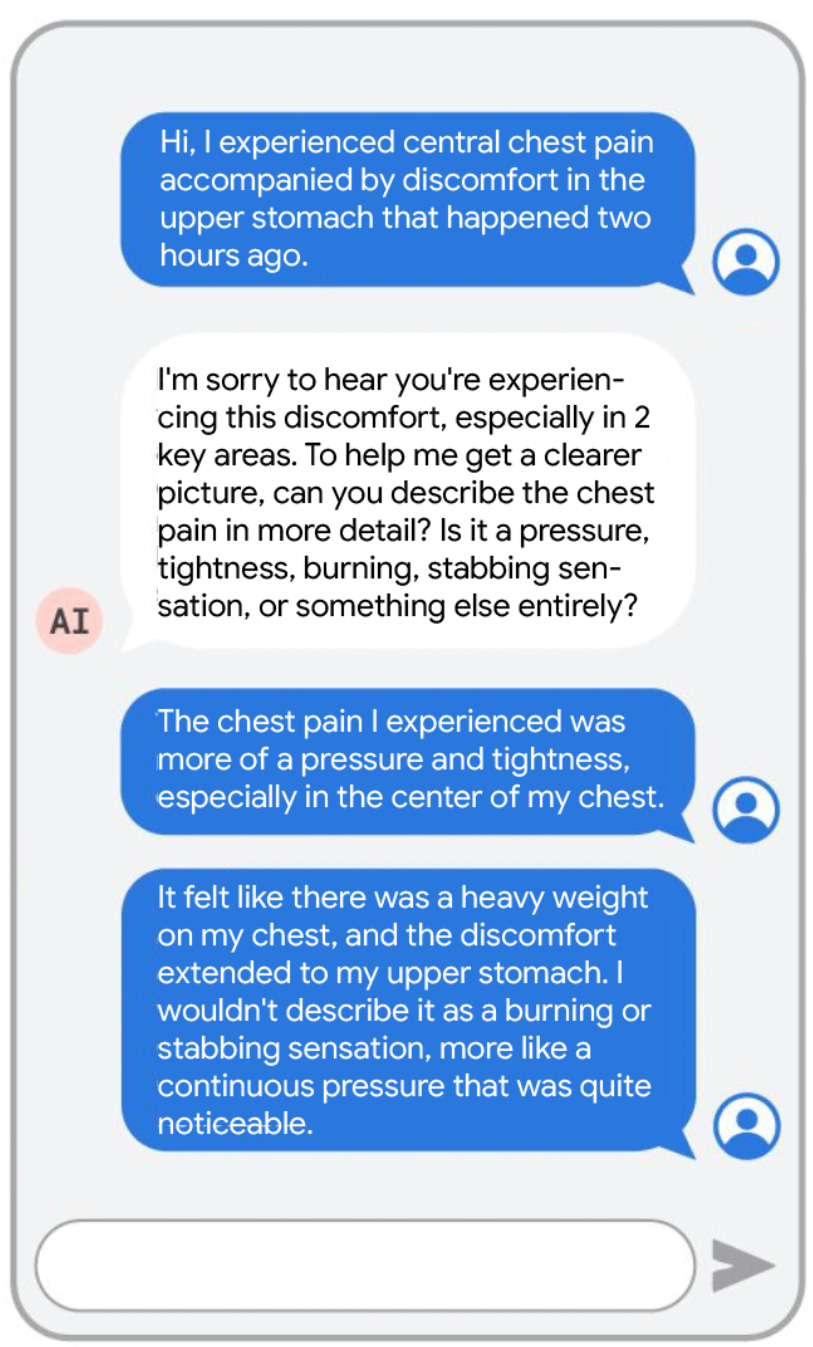

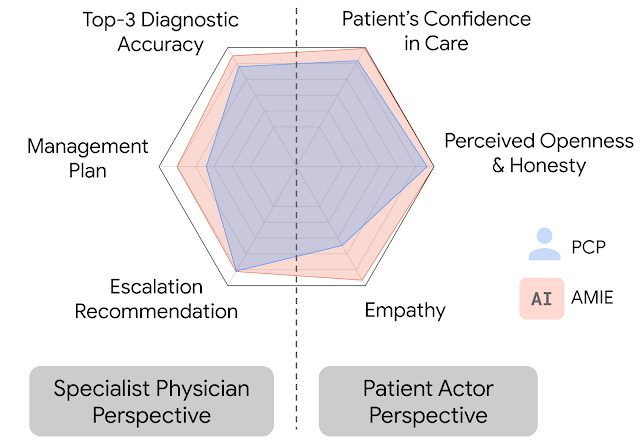

Google’s Research team coded up a patient-facing diagnostic chatbot, AMIE, that subsequently outperformed human physicians on most metrics in a simulation.

Some nice charts here.

We definitely hope that this research can someday be translated into useful real-world services, but we are sometimes dumbfounded at how many hurdles remain. We counted the number of languages offered by Kaiser Permanente in Northern California (22, including Navajo and Mien, neither of which is offered by Google Translate), and we do wonder whether US hospitals would require patient-facing bots to be as facile as humans. We would guess... yes?

Zero to 100° Celsius in 40 seconds

Sam D’Amico has updates on the induction stove the team at Impulse Labs has cooked up. In summary, it:

replaces nasty gas stoves

with awesome induction stoves

which had a bad name for a number of reasons, each of which the Impulse team solved:

slow heating, which was due to power being limited by what a 120 V outlet can draw; they solved this with a battery a quarter the capacity of a Tesla Powerwall

warped pans from overheating, solved with temperature sensors and fine electronic control of heat delivery
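As a rough sanity check on the headline number, the arithmetic below estimates how much power it takes to bring water from 0 to 100 °C in 40 seconds and compares that with what a 120 V outlet can deliver. The 1 litre of water, the 15 A circuit rating, and the lossless heating are our own illustrative assumptions, not figures from Impulse Labs.

```python
# Back-of-the-envelope check, not Impulse's numbers: the water mass, the
# 15 A circuit rating, and lossless heating are all assumptions.

SPECIFIC_HEAT_WATER = 4186.0  # J per kg per degree C (liquid water)


def heating_energy_joules(mass_kg: float, start_c: float, end_c: float) -> float:
    """Energy needed to warm water, ignoring losses to the pan and air."""
    return mass_kg * SPECIFIC_HEAT_WATER * (end_c - start_c)


def seconds_to_heat(energy_j: float, power_w: float) -> float:
    """Time to deliver a given energy at constant power."""
    return energy_j / power_w


def watts_needed(energy_j: float, seconds: float) -> float:
    """Constant power required to deliver the energy in the given time."""
    return energy_j / seconds


OUTLET_WATTS = 120.0 * 15.0  # assumed 120 V, 15 A household circuit

energy = heating_energy_joules(mass_kg=1.0, start_c=0.0, end_c=100.0)
wall_only_seconds = seconds_to_heat(energy, OUTLET_WATTS)
target_watts = watts_needed(energy, seconds=40.0)

print(f"Energy for 1 L, 0 to 100 C: {energy / 1000:.0f} kJ")
print(f"Time on the outlet alone:   {wall_only_seconds:.0f} s")
print(f"Power for a 40 s boil:      {target_watts / 1000:.1f} kW")
```

Under these assumptions the outlet alone would need close to four minutes, while a 40 second boil calls for roughly 10 kW, several times what the wall can supply. That gap is what a battery buffer would have to fill.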

It’s a 6 grand outlay before up to 45% in federal subsidies, and they’re taking orders now. More on the product development here

Things Happen

Andrej Karpathy says IA (Intelligence Augmentation) over AI

Sam Altman tells the YC W24 kickoff: “build with expectation GPT-4 shortcomings will be resolved with GPT-5, and AGI is around the corner”. This news comes third hand, from a deleted tweet from Richard He by way of Howie Xu… Give it the credence you would give gossip about any horse in a race.

The MacBook M3 Max and Apple’s open source machine learning library MLX mean MacBooks are now very fast at inference.

This is the third edition of Self-Aware Neuron! A weekly narrative recap, where I pull together the threads of the week to form a coherent picture of the acceleration. I need feedback! Do you like the videos? Can you see them ok? Follow the Substack link to leave comments!

Videos are fine, tuned to the week feed.

The paradigm is a redraw of hardware, and I see that the mark of language will fate even if as IA (Intelligence Augmentation) can't cooperate with AI.

As the new class of automatons there is this: "and do wonder if US hospitals would require patient facing bots to be as facile as humans.. we would guess.. yes?" as sure inhuman patience towards survivability and not hurried survival mode.